How outline shaders work

The first time I saw outlines on 3D objects in a video game was Ubi Soft’s XIII in 2003. This was the first 3D game I had ever heard of that had a cel-shaded aesthetic. (It was based on a graphic novel.)

Once I saw it, I had to know how it was done. XIII being an Unreal Engine 2 game, I was able to crack open my trusty UnrealEd and poke around.

Unreal Engine 2 used the fixed-function graphics pipeline, meaning there was no shader magic involved in XIII’s thick, ink-like outlines. The outlines were actually a part of each mesh: an outer hull, hovering just off the surface of the character, with inverted face normals so only the interior was visible, covered in a black texture.

We’ve come a long way since 2003 and the fixed-function graphics pipeline, but the way we handle outlines has changed precious little. The biggest change is that outlines no longer need to be a part of the mesh; we can use a vertex shader in a separate pass to render a slightly fattened version of the mesh with front faces culled.

In other words, in our outline pass, we’ll take the normal of the vertex, multiply it by a small number (we don’t want to fatten by a full unit!), and add it to the position of the vertex before transforming it to clip space.
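
To make the arithmetic concrete, here is a minimal sketch in plain Python rather than shader code. The `extrude` helper and the sample vertex are illustrative assumptions, not part of any engine API; in practice this runs once per vertex on the GPU.

```python
def extrude(position, normal, width):
    """Move an object-space position along its unit normal by `width` units."""
    return tuple(p + n * width for p, n in zip(position, normal))

# Hypothetical vertex on the +X side of a unit sphere, fattened by 0.03 units.
fattened = extrude((1.0, 0.0, 0.0), (1.0, 0.0, 0.0), 0.03)
print(fattened)
```

The vertex moves outward only along the axis its normal points down; the other two coordinates are untouched.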

This yields precisely the result XIII was pulling off in 2003 with no shaders whatsoever, but we’ve simplified our authoring workflow by automating the outlining process and offloading the work to the user’s GPU.

But we can do better.

Limitations of the classic technique

For XIII’s graphic novel-inspired aesthetic, these inky outlines are right on the mark. But like any fifteen-year-old, pre-shader technique, they have limitations which make them less useful as a universal outlining solution:

- Outlines vary in thickness over the surface of the object based on shape and viewing angle.

- Outlines undergo foreshortening as objects move away from the camera (when using a perspective projection).

- Outline width is specified in object-space units, not in pixels.

Note that these are limitations, not necessarily problems. Some or all of them may be desirable in certain cases. But each of them should be under our control.

I’m focused on the technique of computer art. When we, as artists, are operating with a high level of technique, we are exerting precise control over the art we produce – that is, the output of our code.

For example, we may want to outline objects as part of our user interface, to signal that they are selected. We’ll probably want these elements to have stable, screen-space widths.

Or we may be mimicking a vector or pixel art style, or mixing 3D meshes with vector or pixel art sprites. We’ll need to be able to match our outline width in pixels.

Over the course of this tutorial, we’ll explore the classic technique, and then evolve it, particularly focusing on how we transform the vertex positions, to be more adaptable to our needs and give us more artistic control over the final look.

Building the classic outline shader

Below is the code for classic, XIII-style outlines as a modern Unity shader.

    Shader "Tutorial/Outline" {
        Properties {
            _Color ("Color", Color) = (1, 1, 1, 1)
            _Glossiness ("Smoothness", Range(0, 1)) = 0.5
            _Metallic ("Metallic", Range(0, 1)) = 0
            _OutlineColor ("Outline Color", Color) = (0, 0, 0, 1)
            _OutlineWidth ("Outline Width", Range(0, 0.1)) = 0.03
        }

        SubShader {
            Tags {
                "RenderType" = "Opaque"
            }

            // Base rendering: a plain surface shader that draws the object
            // itself and writes to the depth buffer.
            CGPROGRAM
            #pragma surface surf Standard fullforwardshadows

            struct Input {
                float4 color : COLOR;
            };

            half4 _Color;
            half _Glossiness;
            half _Metallic;

            void surf(Input IN, inout SurfaceOutputStandard o) {
                o.Albedo = _Color.rgb * IN.color.rgb;
                o.Smoothness = _Glossiness;
                o.Metallic = _Metallic;
                o.Alpha = _Color.a * IN.color.a;
            }
            ENDCG

            // Outline pass: draw only the back faces of a slightly fattened mesh.
            Pass {
                Cull Front

                CGPROGRAM
                #pragma vertex VertexProgram
                #pragma fragment FragmentProgram
                #include "UnityCG.cginc"

                half _OutlineWidth;

                float4 VertexProgram(
                        float4 position : POSITION,
                        float3 normal : NORMAL) : SV_POSITION {
                    // Push each vertex out along its object-space normal.
                    position.xyz += normal * _OutlineWidth;
                    return UnityObjectToClipPos(position);
                }

                half4 _OutlineColor;

                half4 FragmentProgram() : SV_TARGET {
                    return _OutlineColor;
                }
                ENDCG
            }
        }
    }

The first part of this shader (the CGPROGRAM block outside of a Pass block) is a basic surface shader. That’s right: this is the part that renders the object itself, and it’s so irrelevant to this technique that it can even be done with a surface shader. The only important thing about this phase of the rendering is that we write to the depth buffer, which an opaque surface shader does by default.

The outline pass

Next, inside our Pass block, is the part that actually draws the outline, and there are really only two important lines that make this magic work:

    Cull Front

This line causes us to render only the faces of the mesh that are facing away from the camera, most of which will be obscured by the object we drew in the first phase.

    position.xyz += normal * _OutlineWidth;

This line translates the position of each vertex along its normal a short distance, as specified by the _OutlineWidth property.

This is exactly what the artists on XIII did inside their modeling package; we’re just automating it inside a vertex shader.

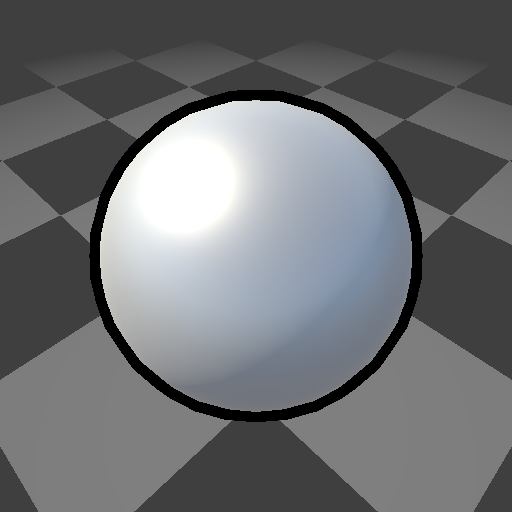

| Property | Value |
|---|---|
| _Color | (255, 255, 255, 1) |
| _Glossiness | 0.5 |
| _Metallic | 0.5 |
| _OutlineColor | (0, 0, 0, 1) |
| _OutlineWidth | 0.03 |

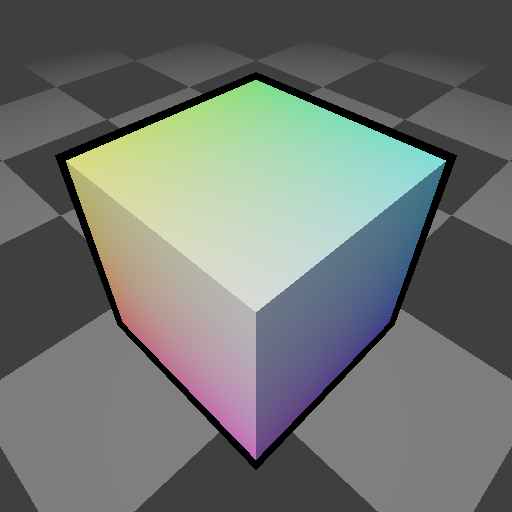

Here’s our classic outline shader applied to a sphere. Looks great!

Unfortunately, a sphere is sort of cheating. It has super smooth normals, no sharp angles, and all its vertices are positioned equidistant from the object center (the definition of a sphere!).

That means we didn’t even need to translate the vertex positions of our outline along the normals. We could have just scaled them.
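
A quick numeric check of that claim, as a sketch in plain Python. It assumes a sphere centered on the object origin, so that each vertex normal is just the normalized position; the radius, sample direction, and helper names are made up for illustration.

```python
import math

def extrude(position, normal, width):
    """Translate a position along its unit normal."""
    return tuple(p + n * width for p, n in zip(position, normal))

def scale(position, factor):
    """Uniformly scale a position about the origin."""
    return tuple(p * factor for p in position)

radius = 0.5                        # assumed sphere radius, centered on the origin
direction = (1 / 3, 2 / 3, 2 / 3)   # unit vector; on a sphere this is also the normal
point = scale(direction, radius)    # a vertex on the sphere's surface
width = 0.03

by_normal = extrude(point, direction, width)
by_scale = scale(point, 1 + width / radius)
same = all(math.isclose(a, b) for a, b in zip(by_normal, by_scale))
print(same)
```

Both routes land on the same point because every normal lines up with the vertex's direction from the center. That stops being true as soon as the shape is anything other than a sphere.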

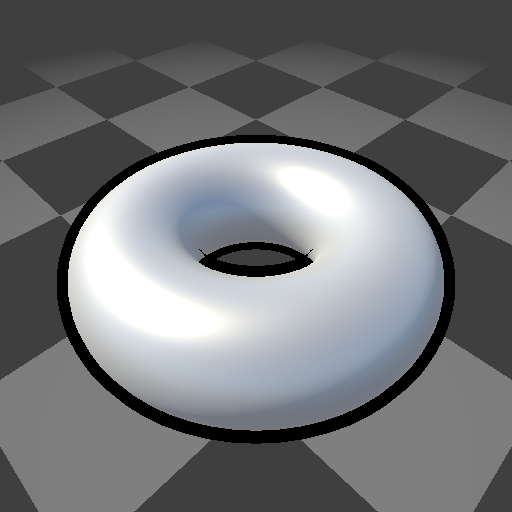

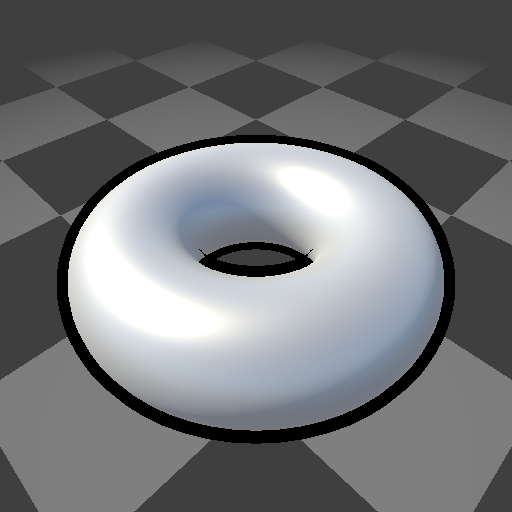

| Property | Value |
|---|---|
| _Color | (255, 255, 255, 1) |
| _Glossiness | 0.5 |
| _Metallic | 0.5 |
| _OutlineColor | (0, 0, 0, 1) |
| _OutlineWidth | 0.03 |

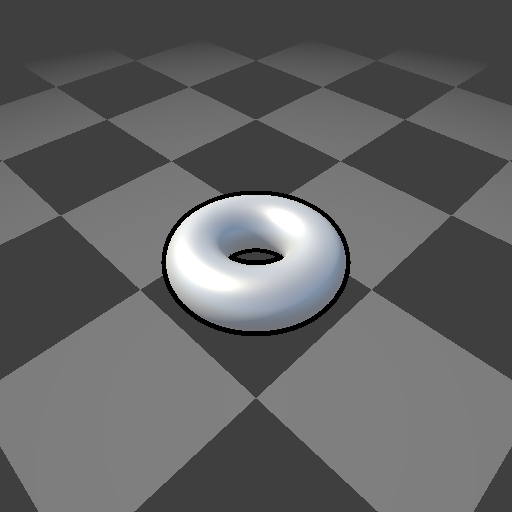

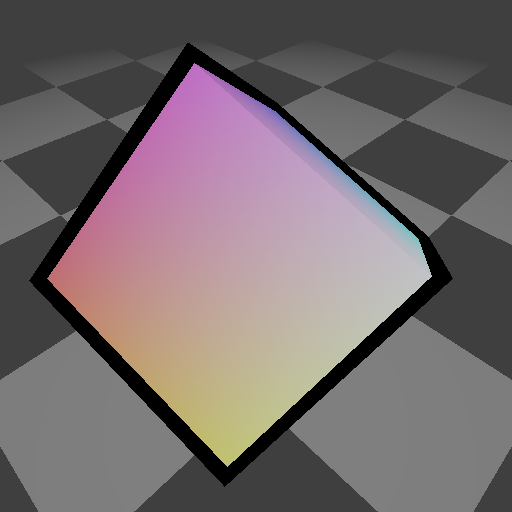

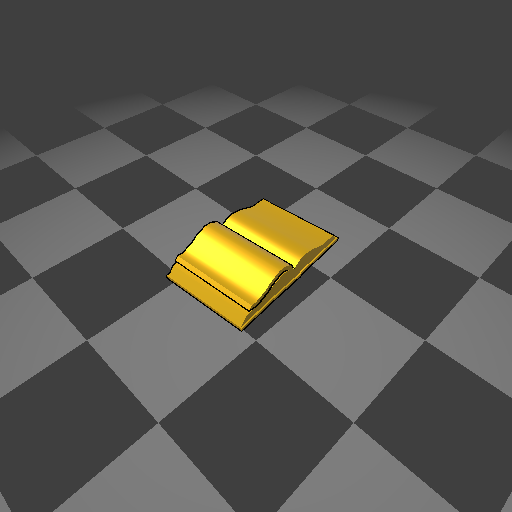

A torus gives a better view of what’s going on. See how the outline reaches into the hole in the center of the object, because the normals there are facing inward.

Scaling and foreshortening

Because we’re translating our vertex positions in object space, any scaling that occurs on our way to world space, and any foreshortening caused by perspective division, will impact the apparent width of the outline.
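
As a rough sketch of how those two effects combine, here is a deliberately simplified pinhole model in plain Python. It assumes uniform object scale and an offset that lies parallel to the screen, and it leaves out the constant factor contributed by the field of view; the function name and values are illustrative only.

```python
def apparent_width(outline_width, object_scale, camera_distance):
    """Relative on-screen width of an object-space outline (simplified model)."""
    world_width = outline_width * object_scale   # the model transform scales the offset
    return world_width / camera_distance         # perspective division shrinks it

base = apparent_width(0.03, 1.0, 5.0)
scaled_up = apparent_width(0.03, 2.0, 5.0)   # twice the scale: twice as thick
farther = apparent_width(0.03, 1.0, 10.0)    # twice the distance: half as thick
print(base, scaled_up, farther)
```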


As we work to improve our control over the shape of our outlines, with the ultimate goal of achieving pixel perfection, we’ll need to learn to counteract the effects of scaling and foreshortening.

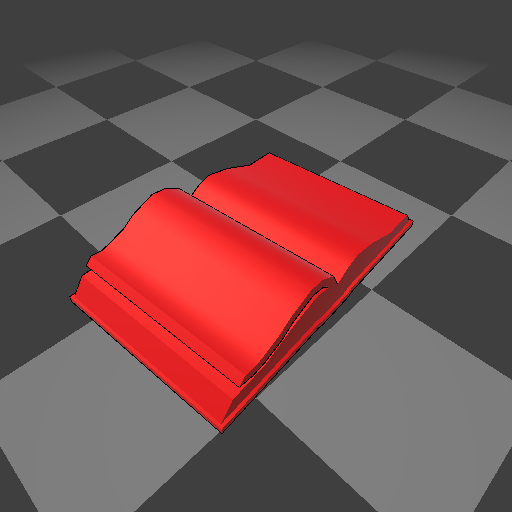

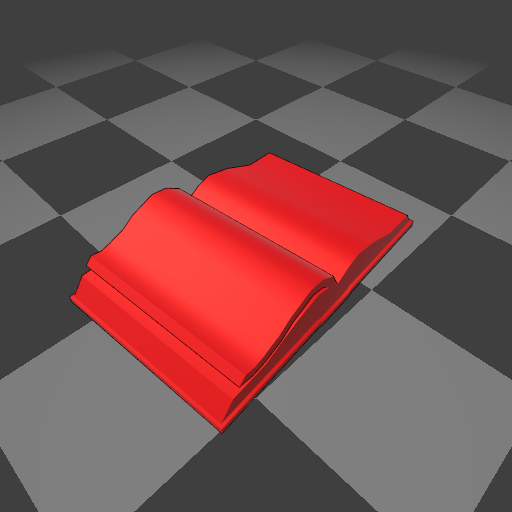

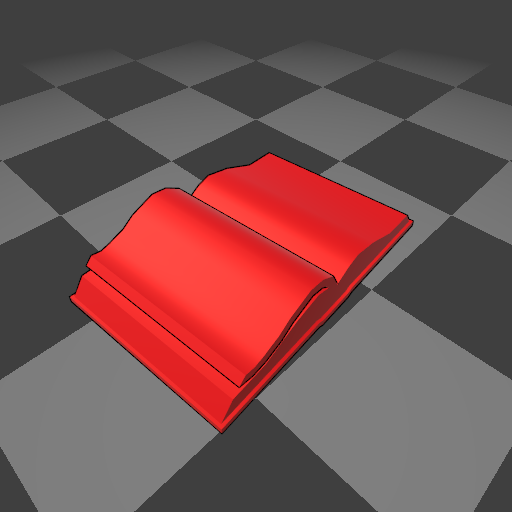

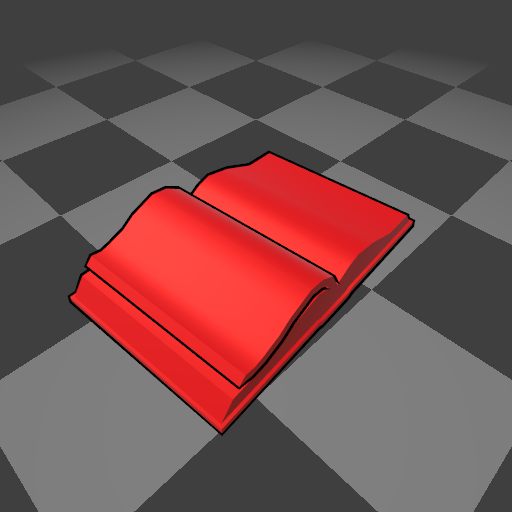

Handling sharp edges

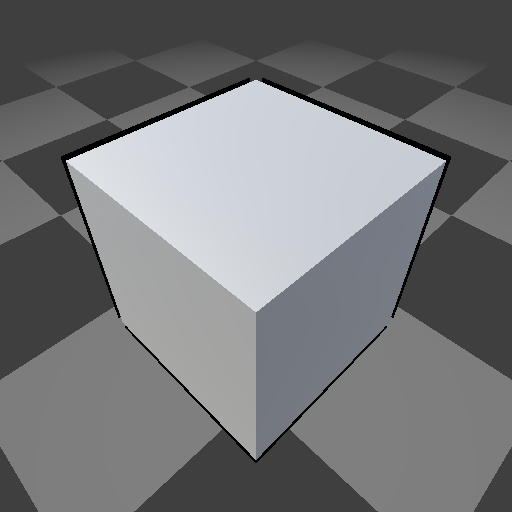

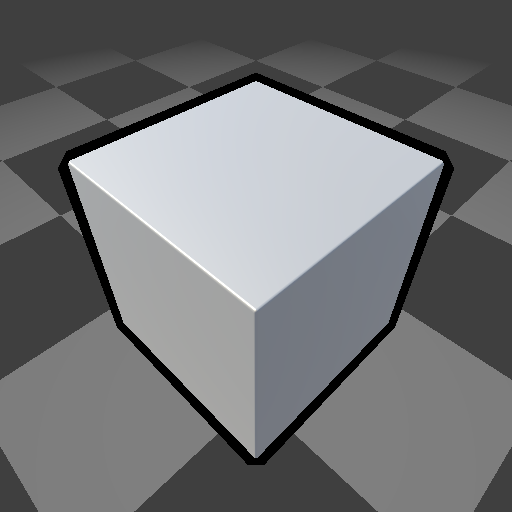

This normal offset technique breaks down on objects with sharp edges. Before we continue refining this technique, we need to find ways to mitigate this issue.

| Property | Value |
|---|---|
| _Color | (255, 255, 255, 1) |
| _Glossiness | 0.5 |
| _Metallic | 0.5 |
| _OutlineColor | (0, 0, 0, 1) |
| _OutlineWidth | 0.03 |

Sharp edges in 3D meshes are achieved by duplicating vertices along the edge. Each side of this cube has four unique vertices with normals oriented perpendicular to those of adjacent sides. When we translate these vertices’ positions along their normals, it’s equivalent to exploding the sides of the cube.
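
The "exploding" effect is easy to see with numbers. This plain-Python sketch takes one corner of a unit cube, where two duplicated vertices share a position but carry different face normals; the values and helper are assumptions for illustration.

```python
import math

def extrude(position, normal, width):
    """Translate a position along its unit normal."""
    return tuple(p + n * width for p, n in zip(position, normal))

corner = (0.5, 0.5, 0.5)
right_face_normal = (1.0, 0.0, 0.0)   # duplicate belonging to the +X face
top_face_normal = (0.0, 1.0, 0.0)     # duplicate belonging to the +Y face
width = 0.03

a = extrude(corner, right_face_normal, width)
b = extrude(corner, top_face_normal, width)
gap = math.dist(a, b)   # the two copies no longer coincide
print(gap)
```

Before the offset the two vertices sat on top of each other; afterward they are separated, which opens a visible gap in the outline at every hard edge.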

One way to address this problem is to make the edges of the cube smooth, and bevel them. This will impact the look of the cube itself.

Another option is to use a separate mesh that has smooth normals exclusively for drawing the outline. This requires us to author or generate in a script a separate mesh for each mesh that has sharp edges that we’d like to outline. (We could also use these meshes for optimized collisions and shadow casting.)

Finally, we can store smooth normal data in another channel of the mesh we aren’t using, e.g. in the vertex colors.
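
Whichever storage you choose, the smooth normals themselves can be generated by averaging. The following is a minimal sketch in plain Python, not a Unity API: `smooth_normals` is a hypothetical helper that groups vertices by exact position and averages their normals. It assumes duplicated vertices have bit-identical positions and that the averaged normal is never zero-length.

```python
import math
from collections import defaultdict

def smooth_normals(positions, normals):
    """Average the normals of all vertices that share a position."""
    sums = defaultdict(lambda: [0.0, 0.0, 0.0])
    for position, normal in zip(positions, normals):
        total = sums[position]
        for axis in range(3):
            total[axis] += normal[axis]

    smoothed = []
    for position in positions:
        x, y, z = sums[position]
        length = math.sqrt(x * x + y * y + z * z)   # assumed non-zero
        smoothed.append((x / length, y / length, z / length))
    return smoothed

# One corner of a cube: three duplicated vertices, one per adjacent face.
corner = (0.5, 0.5, 0.5)
positions = [corner, corner, corner]
normals = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
result = smooth_normals(positions, normals)
print(result)
```

All three duplicates end up with the same diagonal normal, so they move together when extruded and the outline stays closed.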

How you handle this is up to you, and may vary case by case. It will affect the structure of your shaders, but not the specific algorithms we’ll be using to manipulate the outline shapes.

Grazing angles

One final thing I want to point out about this classic technique is that the thickness of the outline can vary not just based on the scale and distance of the object, but on the angle it’s viewed at.

This difference in width is not attributable to just foreshortening, although we are seeing some foreshortening effects.

Because we are extruding the outline in three dimensions along the normal, some of the outline’s width is being used to travel toward or away from the camera, which doesn’t affect the outline’s apparent thickness.
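
One way to put a number on this, sketched in plain Python under an orthographic assumption (perspective is ignored entirely): only the part of the normal that is perpendicular to the view direction contributes to on-screen thickness. The function name and sample vectors are illustrative.

```python
import math

def apparent_fraction(normal, view_direction):
    """Fraction of the outline width that ends up on screen.

    Both arguments are unit vectors.
    """
    along_view = sum(n * v for n, v in zip(normal, view_direction))
    return math.sqrt(max(0.0, 1.0 - along_view * along_view))

view = (0.0, 0.0, 1.0)
sideways = apparent_fraction((1.0, 0.0, 0.0), view)   # normal parallel to the screen
tilted = apparent_fraction((0.6, 0.0, 0.8), view)     # normal leaning along the view axis
print(sideways, tilted)
```

The sideways normal spends its whole length on screen, while the tilted one spends most of it moving along the view axis.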

For inky outlines like XIII’s graphic novel-inspired art, this variation in outline thickness adds to the illusion that the outlines are hand-drawn.

But when we’re using outlines as user interface elements (e.g. to show which objects are selected), or to achieve the look of a mechanical drawing, this variation is undesirable. We need to learn how to control it.

Working in clip space

Our first evolution of the classic outlining technique is to transform our vertex positions and normals into clip space before translating our vertex positions.

This allows us to bypass the model transformation to world space, thus counteracting any object scaling (as long as we normalize our normal after the transformation).

This may not seem that useful. After all, scaling objects in Unity is not exactly a best practice. However, it also sets us up to bypass perspective foreshortening later.

    float4 VertexProgram(
            float4 position : POSITION,
            float3 normal : NORMAL) : SV_POSITION {
        // Take both the position and the normal direction to clip space.
        float4 clipPosition = UnityObjectToClipPos(position);
        float3 clipNormal = mul((float3x3) UNITY_MATRIX_VP, mul((float3x3) UNITY_MATRIX_M, normal));

        // Normalizing after the transform cancels any object scaling.
        clipPosition.xyz += normalize(clipNormal) * _OutlineWidth;
        return clipPosition;
    }
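
The reason normalizing cancels scale can be checked in isolation. This plain-Python sketch models only a uniform scale (the view and projection matrices are left out), and the helper names are assumptions for illustration.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def scale_vector(v, s):
    """Stand-in for a model matrix that applies a uniform scale."""
    return tuple(c * s for c in v)

normal = (0.6, 0.0, 0.8)
width = 0.015

lengths = []
for object_scale in (0.5, 1.0, 4.0):
    offset = tuple(c * width for c in normalize(scale_vector(normal, object_scale)))
    lengths.append(math.sqrt(sum(c * c for c in offset)))
print(lengths)
```

Whatever the scale, the offset comes out the same length, because the scale is divided back out before the width is applied.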

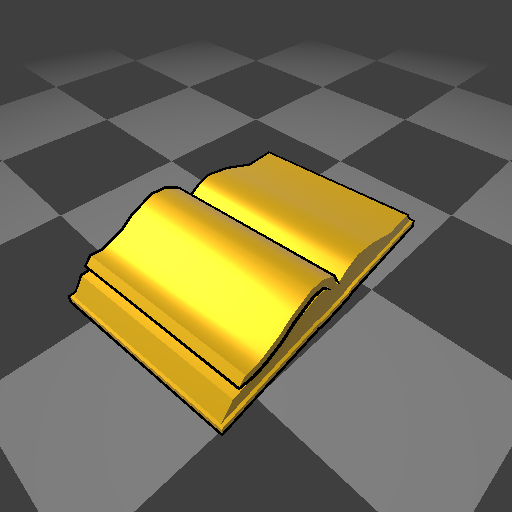

| Property | Value |
|---|---|
| _Color | (255, 195, 0, 1) |
| _Glossiness | 0.5 |
| _Metallic | 0.5 |
| _OutlineColor | (0, 0, 0, 1) |
| _OutlineWidth | 0.015 |

Now that we’re in clip space, scaling our object does not affect the relative width of our outlines, although they are still foreshortened by perspective.


Extruding in two dimensions

The logical next step after moving to clip space is to start working in two dimensions. Remember, in clip space, our position’s x and y components correspond to the vertex’s horizontal and vertical placement on screen.

By extruding in only two dimensions, our _OutlineWidth property will allow us to specify a portion of the screen we want our outlines to take up, rather than a three-dimensional distance from the surface of our object. It takes us one step closer to having direct artistic control over the output of our shader, not just the input.

    float2 offset = normalize(clipNormal.xy) * _OutlineWidth;
    clipPosition.xy += offset;

By normalizing just the x and y components, we’re ensuring that our offset takes up exactly _OutlineWidth of clip space (which is not quite screen space). This should greatly reduce the amount of variation in outline width at grazing angles, but it still won’t mitigate foreshortening.
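
To see what the two-dimensional normalization buys us, compare it with the three-dimensional version on a normal that mostly points along z. This is a plain-Python sketch with made-up values; note that a normal with no x or y component at all would have nothing to normalize, a case this sketch does not handle.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

clip_normal = (0.6, 0.0, 0.8)   # hypothetical clip-space normal, mostly along z
width = 0.015

# Normalize in 3D, then keep only the part that lands on screen.
offset_3d = tuple(c * width for c in normalize(clip_normal))[:2]
# Normalize only the x and y components.
offset_2d = tuple(c * width for c in normalize(clip_normal[:2]))

length_3d = math.hypot(*offset_3d)
length_2d = math.hypot(*offset_2d)
print(length_3d, length_2d)
```

The 3D version loses part of its length to the z axis, while the 2D version always spends the full width in the screen plane.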

Eliminate foreshortening

How exactly is foreshortening happening? We aren’t foreshortening our offset in the vertex shader; it should be exactly equal in length to _OutlineWidth regardless of the distance from the camera. So why is it getting smaller?

In fact, all foreshortening occurs after the vertex shader, in a part of the graphics pipeline we have no control over. In a step called “perspective division,” the x and y components of every vertex position, including our outlines, are divided by their w component. Since (0, 0) is at the center of the screen, this means larger w values cause our positions to move closer to the center of the screen (and thus appear smaller and farther away).

The w component itself is populated by the projection transform. When we use an orthographic projection, the w component is constant, but when we use a perspective projection, it grows with the camera-space z component (the distance from the camera).

It’s essential to proper projection, so we can’t eliminate the w component entirely. Instead, we’ll need to counteract perspective division (division by w) by premultiplying our offset by w.

    float2 offset = normalize(clipNormal.xy) * _OutlineWidth * clipPosition.w;
    clipPosition.xy += offset;
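
Here is the same idea with numbers, as a plain-Python sketch along a single axis. The `screen_offset` helper and the sample w values are assumptions for illustration; it simply measures how far a vertex moves after perspective division, with and without the premultiplication.

```python
def screen_offset(clip_x, clip_w, offset, premultiply):
    """Post-division displacement of a vertex whose clip-space x was offset."""
    if premultiply:
        offset *= clip_w   # counteract the upcoming division by w
    before = clip_x / clip_w
    after = (clip_x + offset) / clip_w
    return after - before

near = screen_offset(0.5, 2.0, 0.015, premultiply=False)
far = screen_offset(0.5, 8.0, 0.015, premultiply=False)
near_fixed = screen_offset(0.5, 2.0, 0.015, premultiply=True)
far_fixed = screen_offset(0.5, 8.0, 0.015, premultiply=True)
print(near, far, near_fixed, far_fixed)
```

Without the premultiplication, the farther vertex moves a quarter as far as the nearer one. With it, both move by the same amount.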

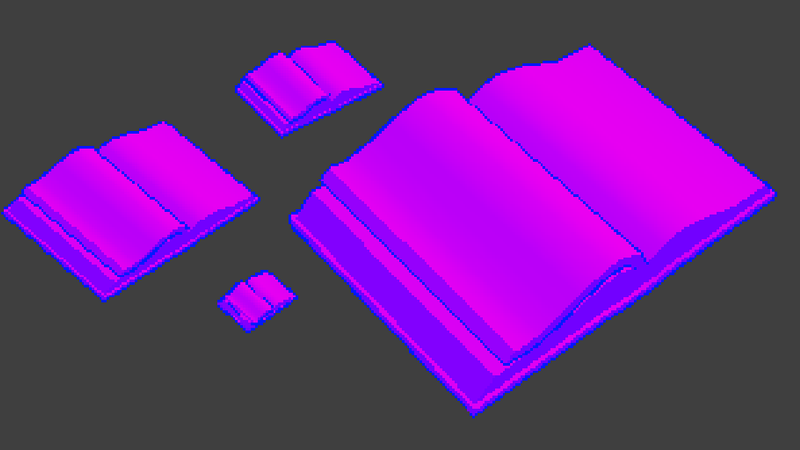

If we multiply the clip-space position by w instead of just the offset (or if we set w to 1), our book will remain the same size on screen regardless of how far from the camera it is. A neat trick to remember!

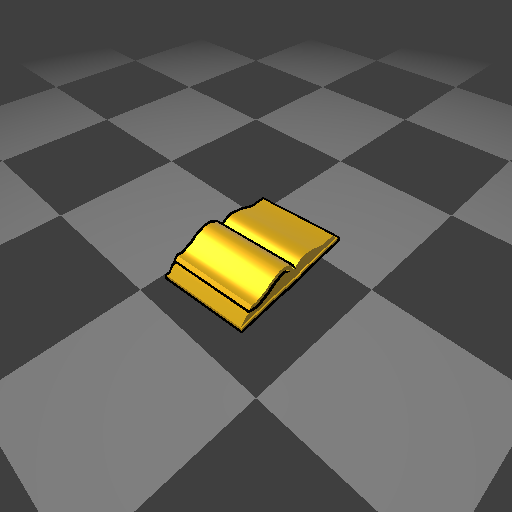

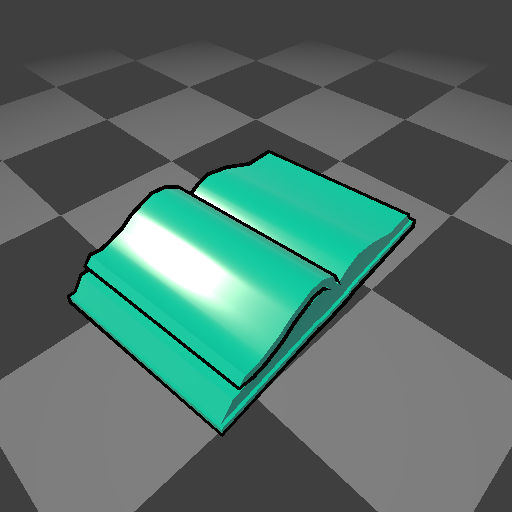

| Property | Value |
|---|---|
| _Color | (0, 210, 167, 1) |
| _Glossiness | 0.75 |
| _Metallic | 0 |
| _OutlineColor | (0, 0, 0, 1) |
| _OutlineWidth | 0.015 |

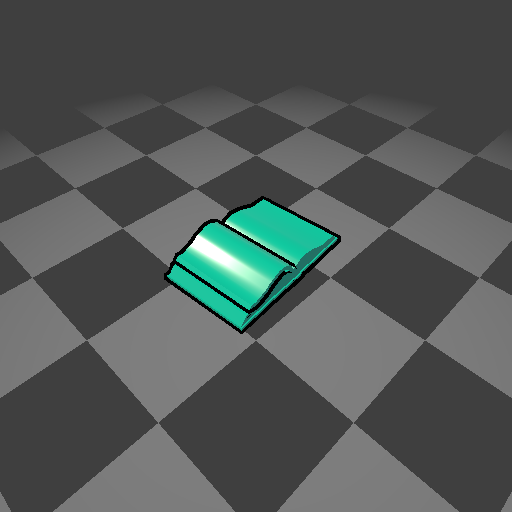

Now that we’ve mitigated foreshortening, we’re truly operating in screen space. Our _OutlineWidth now correlates directly to the amount of our screen that the outline will cover (where 1 is 50% of the screen).


Accounting for aspect ratio

So if an _OutlineWidth of 1 is equivalent to 50% of our screen, we have a problem! Most of the time, our screen’s width and height are not the same size.

If our screen is wider than it is high (which is usually the case), then our outlines will be thicker horizontally than they are vertically.
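
For a sense of scale, here is the mismatch at an assumed 1920 by 1080 resolution, sketched in plain Python. The helper name is hypothetical.

```python
def ndc_to_pixels(ndc_length, screen_size):
    """Convert a post-division length to pixels along one axis.

    The visible range is -1 to 1, so one unit covers half the screen.
    """
    return ndc_length * screen_size / 2

screen_width, screen_height = 1920, 1080   # assumed 16:9 resolution
horizontal = ndc_to_pixels(0.01, screen_width)
vertical = ndc_to_pixels(0.01, screen_height)
print(horizontal, vertical)
```

The same outline width covers noticeably more pixels horizontally than vertically, in proportion to the aspect ratio.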

That’s cool if we’re going for a calligraphic look, although we’d probably want more precise control over it than leaving it up to the user’s aspect ratio.

However, normalizing our offset based on the screen dimensions brings us so close to being able to specify our outline width in pixels, that we may as well just do that.

Pixel perfection

We need to divide our offset’s x and y components by the screen width and height, respectively. Unity makes these available to us as the x and y components of the _ScreenParams variable. Now our _OutlineWidth will be a measure of our outline’s width in pixels.

    float2 offset = normalize(clipNormal.xy) / _ScreenParams.xy * _OutlineWidth * clipPosition.w;

Not so fast! In clip space, our x and y coordinates range from -w to w. After perspective division, these will be -1 to 1, for a total range of 2. In order for an _OutlineWidth of 1 to equal 1 pixel, we’ll need to divide our screen width and height by 2, or multiply our offset by 2 (probably simpler).

    float2 offset = normalize(clipNormal.xy) / _ScreenParams.xy * _OutlineWidth * clipPosition.w * 2;
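
We can sanity-check the pixel math outside the shader. This plain-Python sketch mirrors the expression above, minus the w factor (which perspective division cancels), and converts the result back to pixels. The function names and the 1920 by 1080 resolution are assumptions for illustration.

```python
def pixel_offset(direction, width_px, screen_size):
    """Post-division offset for a unit 2D direction and a width in pixels."""
    return tuple(d / s * width_px * 2 for d, s in zip(direction, screen_size))

def to_pixels(offset, screen_size):
    """Convert a post-division offset (range -1 to 1) to pixels."""
    return tuple(o * s / 2 for o, s in zip(offset, screen_size))

screen = (1920, 1080)
horizontal = to_pixels(pixel_offset((1.0, 0.0), 3, screen), screen)
vertical = to_pixels(pixel_offset((0.0, 1.0), 3, screen), screen)
print(horizontal, vertical)
```

A requested width of 3 comes back as 3 pixels on either axis, so the aspect ratio no longer leaks into the outline.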

Floating point division is expensive. Bonus points for precalculating the reciprocals of the screen width and height and premultiplying them by the outline width in pixels on the CPU, then passing that vector (we still need separate x and y values) in place of the _OutlineWidth property. This could be done in a custom shader GUI.
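
The CPU-side calculation is small. In Unity it would live in C#, but the arithmetic is sketched here in plain Python; `outline_scale` is a hypothetical helper, and the vector it returns would be uploaded as a two-component shader property of your own naming. It would need recomputing whenever the resolution changes.

```python
def outline_scale(width_px, screen_width, screen_height):
    """Per-axis factor to upload in place of a scalar outline width.

    Folds the reciprocal screen size, the pixel width, and the factor of 2
    into one vector, so the shader only has to multiply.
    """
    return (2.0 * width_px / screen_width, 2.0 * width_px / screen_height)

scale_x, scale_y = outline_scale(1.5, 1920, 1080)
print(scale_x, scale_y)
```

With that vector available, the shader-side offset reduces to the normalized x and y of the clip-space normal, multiplied component-wise by the vector and by the clip-space w.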

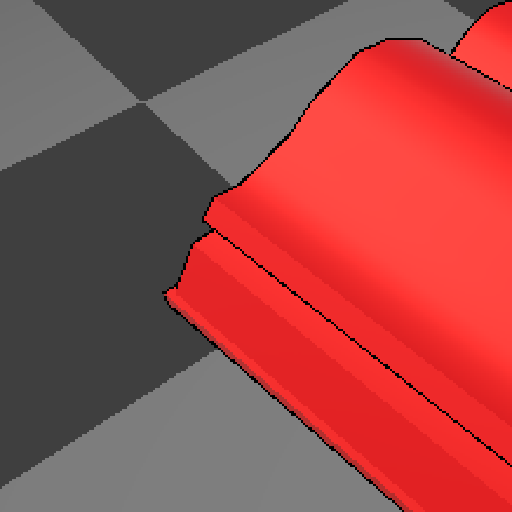

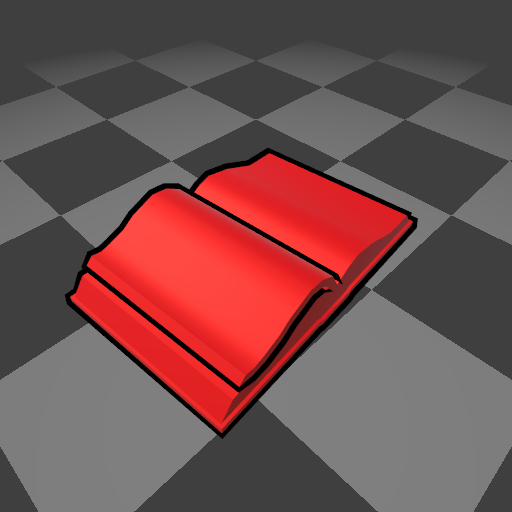

| Property | Value |
|---|---|
| _Color | (255, 0, 0, 1) |
| _Glossiness | 0.5 |
| _Metallic | 0.25 |
| _OutlineColor | (0, 0, 0, 1) |
| _OutlineWidth | 1 |

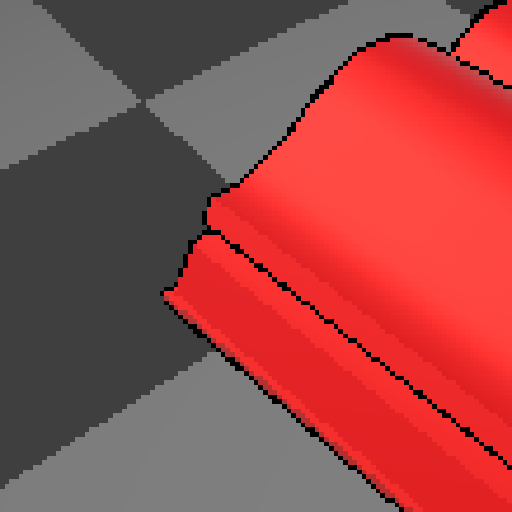

Here it is, our holy grail, the ultimate level of artistic control over the output of our shader. Pixel-perfect screen space outlines. With anti-aliasing turned off, we can see how crispy they are.


The rasterizer isn’t going to end up placing pixels exactly as a talented pixel artist would, but for blending dynamic 3D meshes with pixel art sprites, it’s close enough.

| Property | Value |
|---|---|
| Supersampling | |
| _OutlineWidth | 1 |

| Property | Value |
|---|---|
| Supersampling | |
| _OutlineWidth | 1.5 |

With anti-aliasing turned on, we get lovely, precise vector art outlines. I really like the heft of 1.5 pixels here.

| Property | Value |
|---|---|
| Supersampling | |
| _OutlineWidth | 3 |

| Property | Value |
|---|---|
| Supersampling | |
| _OutlineWidth | 6 |